Docker Management and Kubernetes Orchestration

Navigate the container world with Azure examples

I had the extreme pleasure of presenting at DevNexus, an Atlanta-based Java conference, and again at the Google Developer User Group in Atlanta on June 19th, 2018. DevNexus regularly sells out and had 1,800 attendees this year.

Although my two live presentations weren’t recorded, I did have the opportunity to stop by Channel 9 studios in Warsaw, Poland.

Hello @ch9 #warsaw #poland! About to record a presentation about #docker and #azure pic.twitter.com/X4GHS2qd5o

— Jeremy Likness ⚡️ (@jeremylikness) April 26, 2018

To view the deck, scroll down later in this article or download the full PowerPoint presentation ⬇ here.

The Dockerfile definitions and full source code from the presentation are available on GitHub here. I was really amazed at the energy of the audience and the size of the crowd. I captured my trademark 360-degree picture about 5 minutes before the talk started, and it was standing room only by the time I finished. My loosely conducted survey revealed that most of the audience was new to using Docker in production and not very familiar with Kubernetes.

I started with a gentle introduction to Docker’s capabilities by highlighting a browser I used when I discovered the Internet in 1994. The browser, named Lynx, was text-only. It is still maintained today and easy to run in a Docker container. After you install Docker, simply type:

docker run -it --name lynx jess/lynx

This will download the image and launch the browser. Take a look at an accessible site like DevNexus.com, then see what a Single Page Application looks like by navigating to angular.io. We got a good laugh at the, shall we say, “minimalist” result. (Sorry, you’ll have to try it yourself.)

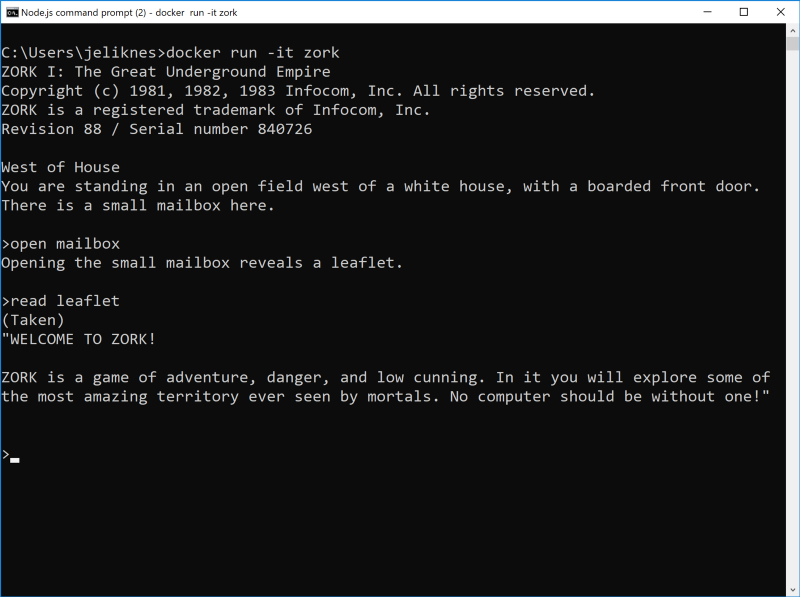

Next, I talked about a revolutionary virtual machine called the Z-machine that was created in 1979, almost 40 years ago (yes, you read that right). College graduates built this innovative machine while migrating a popular adventure game from a mainframe to personal computers. With a single implementation of the Z-machine for each of the incompatible personal computers available at the time, ranging from the TRS-80 and Commodore 64 to the Spectrum, Apple and Atari, they could write a game once to the Z-machine specification and run it everywhere. This is a perfect scenario to illustrate multi-stage builds.

The Zork Dockerfile grabs a Z-machine implemented in Go, uses a Docker container with the Go development environment pre-installed to compile it, then creates an image with the Zork game file that is playable.

# Stage 1: compile the Z-machine interpreter with the Go toolchain image
FROM golang:alpine AS build-env
RUN mkdir /src
ADD http://msinilo.pl/download/zmachine.go /src
RUN cd /src && go build -o goapp

# Stage 2: copy only the compiled binary and the game file into a small image
FROM alpine
RUN mkdir /app
WORKDIR /app
COPY --from=build-env /src/goapp /app
ADD https://github.com/visnup/frotz/blob/master/lib/ZORK1.DAT?raw=true /app/zork1.dat
ENTRYPOINT ./goapp

We even played a few rounds.

To put things in perspective, the Zork image ends up at around six megabytes in size. The game was originally able to run on machines with only 64 kilobytes of memory.
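For anyone following along, building and playing the game could look roughly like the sketch below. It assumes the Zork Dockerfile is saved as `Dockerfile` in the current directory; the function name and the default `zork` tag are placeholders of mine, not names from the presentation.

```shell
# Sketch: build the multi-stage Zork image and start an interactive game.
# The default tag "zork" is a hypothetical name, not one from the talk.
play_zork() {
  local tag="${1:-zork}"
  # Only the final alpine stage ends up in the tagged image; the golang
  # stage is used for compilation and then left behind.
  docker build -t "$tag" . || return 1
  # -it attaches your terminal so you can type game commands;
  # --rm removes the container when you quit.
  docker run -it --rm "$tag"
}
```

Calling `play_zork` with no arguments uses the default tag. Running `docker images zork` afterwards should list the size of the final image.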

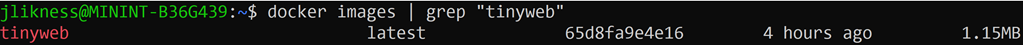

Of course, the ultimate goal is to create your own application. I wrote an HTML file on the fly, used a tiny busybox image to host it on a web server, then built and ran this Dockerfile:

FROM busybox:latest
RUN mkdir /www
COPY index.html /www
EXPOSE 80
# Run httpd in the foreground (-f) on port 80, serving files from /www
CMD ["httpd", "-f", "-p", "80", "-h", "/www"]

This created an extremely small image.
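A rough sketch of the build-and-run step for the busybox Dockerfile follows. It assumes `index.html` and the Dockerfile sit in the current directory; the `tinyweb` tag and host port 8080 are illustrative defaults I picked, not values from the talk.

```shell
# Sketch: build the busybox-based image, serve it locally, and list its size.
# "tinyweb" and host port 8080 are hypothetical defaults.
serve_tinyweb() {
  local tag="${1:-tinyweb}"
  local port="${2:-8080}"
  docker build -t "$tag" . || return 1
  # Map the chosen host port to port 80, where httpd listens in the container.
  docker run -d --rm -p "$port:80" "$tag" || return 1
  # Show the repository, tag, and size of the image that was just built.
  docker images "$tag"
}
```

After `serve_tinyweb`, the page should be reachable at `http://localhost:8080`, and the last command prints the image size.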

Running it locally is fine, but the enterprise demands more. First, it is important to have a secured private registry to host custom images when proprietary software is involved. Second, the images need to be deployed somewhere that is accessible to end users. I demonstrated how to create a private Azure Container Registry from the command line.
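The exact commands from the demo are not reproduced here, but creating a registry and pushing an image with the Azure CLI might look something like this sketch. The resource group, registry name, image name, `v1` tag, and the `eastus` default location are all assumptions; registry names must be globally unique.

```shell
# Sketch: create a private Azure Container Registry and push a local image.
# Every name passed in is hypothetical; "eastus" is an assumed default region.
push_to_acr() {
  local group="$1"
  local registry="$2"
  local image="$3"
  local location="${4:-eastus}"
  local remote="$registry.azurecr.io/$image:v1"
  az group create --name "$group" --location "$location" || return 1
  # The admin account is enabled so the registry name can double as a username.
  az acr create --resource-group "$group" --name "$registry" --sku Basic --admin-enabled true || return 1
  # Log the local Docker client in to the new registry.
  az acr login --name "$registry" || return 1
  # Images must be tagged with the registry's login server before pushing.
  docker tag "$image" "$remote" || return 1
  docker push "$remote"
}
```

For example, `push_to_acr demo-rg myreg tinyweb` would push a local `tinyweb` image as `myreg.azurecr.io/tinyweb:v1`.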

Next, I deployed it to the web from the private registry with a single command using Azure Container Instances.
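As a hedged illustration of that deployment, the sketch below wraps `az container create`. It assumes a registry with the admin account enabled and an image tagged `v1`; all names are placeholders. The deployment itself is the single `az container create` call, while the password lookup is a preparatory step I added. Newer CLI releases may require further arguments such as CPU and memory.

```shell
# Sketch: run an image from a private registry on Azure Container Instances.
# Assumes the registry's admin account is enabled; all names are hypothetical.
deploy_to_aci() {
  local group="$1"
  local registry="$2"
  local image="$3"
  local label="$4"
  local password
  # Look up the registry's admin password so ACI can pull the image.
  password="$(az acr credential show --name "$registry" --query "passwords[0].value" --output tsv)" || return 1
  az container create \
    --resource-group "$group" \
    --name "$image" \
    --image "$registry.azurecr.io/$image:v1" \
    --registry-login-server "$registry.azurecr.io" \
    --registry-username "$registry" \
    --registry-password "$password" \
    --dns-name-label "$label" \
    --os-type Linux \
    --ports 80
}
```

The `--dns-name-label` value becomes part of the public host name, so it needs to be unique within the chosen Azure region.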

I also shared how to leverage Azure App Service’s Web App for Containers to create a solution that scales both up and out:

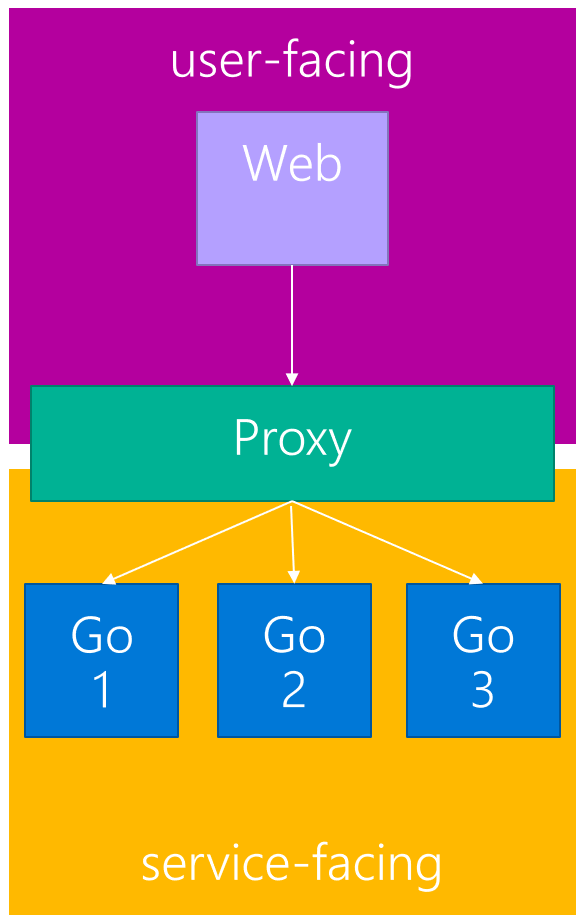
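Scaling up and out can also be driven from the command line. The sketch below is an assumption-laden outline: the plan, app, and image names are placeholders, the image is assumed to be pullable by App Service, and the image flag is spelled as I recall it from the CLI of that period, so check `az webapp create --help` on a current install.

```shell
# Sketch: host a container on Web App for Containers, then scale the plan.
# All names are hypothetical; flag spellings may differ in newer CLI releases.
create_container_web_app() {
  local group="$1"
  local plan="$2"
  local app="$3"
  local image="$4"
  # A Linux App Service plan is required for container hosting.
  az appservice plan create --resource-group "$group" --name "$plan" --is-linux --sku S1 || return 1
  az webapp create --resource-group "$group" --plan "$plan" --name "$app" \
    --deployment-container-image-name "$image"
}

scale_web_app() {
  local group="$1"
  local plan="$2"
  local sku="$3"
  local workers="$4"
  # Scale up: move the plan to a larger pricing tier.
  az appservice plan update --resource-group "$group" --name "$plan" --sku "$sku" || return 1
  # Scale out: run more instances of the app behind the built-in load balancer.
  az appservice plan update --resource-group "$group" --name "$plan" --number-of-workers "$workers"
}
```

Scaling up changes the size of each instance, while scaling out changes how many instances serve traffic; the two are independent settings on the same plan.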

Running single containers is interesting, but production applications usually involve multiple containers. I wrote a simple application to illustrate how to scale backend services using Docker Compose.

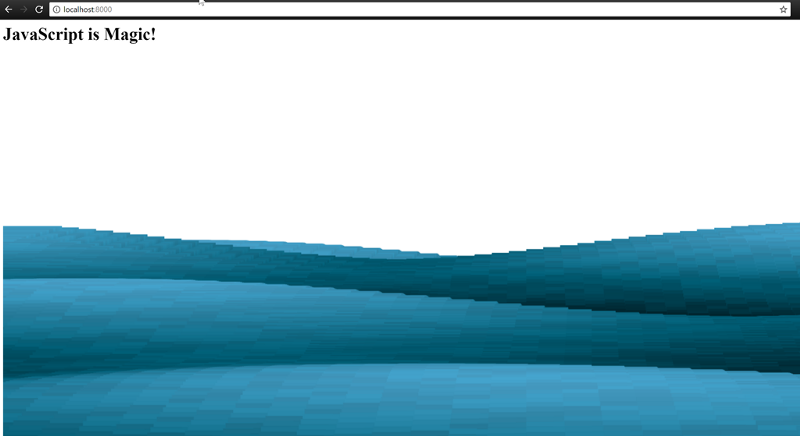

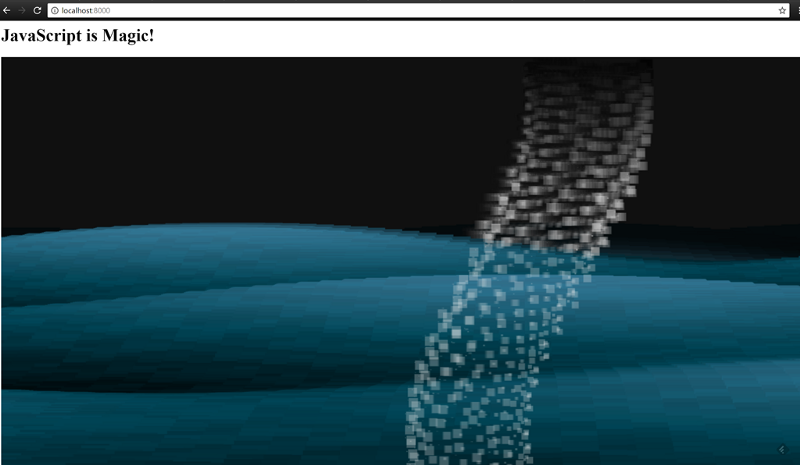

The service backplane is made up of containers that run a small Go application that serves one of three JavaScript snippets from Dwitter. The front-end is a web app that executes the code on an interval. The result literally visualizes the scale operations. Here is the compose file:

version: '2'

networks:
  user-facing:
    driver: bridge
  service-facing:
    driver: bridge

services:
  webserver:
    image: webserver
    build: ./webserver
    ports:
      - 8000:80
    networks:
      - user-facing

  goservice:
    image: goservice
    build: ./goservice
    ports:
      - 8080
    networks:
      - service-facing

  proxy:
    image: dockercloud/haproxy
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock
    ports:
      - 8080:80
    links:
      - goservice
    networks:
      - user-facing
      - service-facing

Running a single backend instance results in this:

Scaling out, however, results in multiple snippets running, and renders something like this:
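The scale-out step itself can be sketched as a single Compose command. This assumes the compose file above is saved as `docker-compose.yml` in the current directory and that the standalone `docker-compose` binary is installed; the function name and the default of three replicas are my own choices.

```shell
# Sketch: start the stack with several goservice containers behind the proxy.
# The default of 3 replicas is an arbitrary, hypothetical choice.
scale_backend() {
  local replicas="${1:-3}"
  # --build rebuilds the webserver and goservice images if needed;
  # --scale sets how many goservice containers run.
  docker-compose up -d --build --scale goservice="$replicas"
}
```

Because `goservice` publishes container port 8080 without fixing a host port, each replica gets its own ephemeral host port, so the replicas do not collide. Running `scale_backend 1` brings the stack back to a single backend instance.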

Running compose is fine, but doesn’t provide resiliency beyond a single host. This is where orchestration comes into play. Orchestration platforms provide features including:

- Resiliency

- Scale out

- Load-balancing

- Service discovery

- Roll-out and roll-back (canary and green/blue deployments)

- Secret and configuration management

- Storage management

- Batch job control

The de facto orchestration platform today is Kubernetes. I ended the presentation by walking through deploying the application to the Azure cloud using Azure Kubernetes Service (AKS).
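The walkthrough itself is in the deck, but a minimal outline of such a deployment might look like the following. It is a sketch under several assumptions: the cluster and resource group names are placeholders, the `goservice` image has already been pushed somewhere the cluster is allowed to pull from, and the backend listens on port 8080 as in the compose file.

```shell
# Sketch: create an AKS cluster and run the goservice backend on it.
# All names are hypothetical; the cluster must be able to pull the image.
deploy_to_aks() {
  local group="$1"
  local cluster="$2"
  local image="$3"
  local replicas="${4:-3}"
  az aks create --resource-group "$group" --name "$cluster" --node-count 2 --generate-ssh-keys || return 1
  # Merge the cluster's credentials into the local kubectl configuration.
  az aks get-credentials --resource-group "$group" --name "$cluster" || return 1
  kubectl create deployment goservice --image="$image" || return 1
  # Scale out: Kubernetes spreads the replicas across the available nodes.
  kubectl scale deployment goservice --replicas="$replicas" || return 1
  # Load-balance public traffic on port 80 to port 8080 in the containers.
  kubectl expose deployment goservice --type=LoadBalancer --port=80 --target-port=8080
}
```

Unlike the Compose setup, the replicas here can land on different nodes, which is where the resiliency beyond a single host comes from.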

Here is the full deck I presented from.

View the presentation on Slideshare.net

You can access the Dockerfile definitions and source code here.

According to Google’s Kelsey Hightower in his DevNexus keynote, containers are the future.

Watching @kelseyhightower "The future of the #cloud will be containerized" #Keynote @devnexus #Devnexus pic.twitter.com/6vDc7j8PDV

— Jeremy Likness ⚡️ (@jeremylikness) February 23, 2018

He just may be right.

Regards,
